The 1976 Olympic Games, held in Montreal over a two-week period in July, represented the absolute pinnacle of athletic competition. Caitlyn (then Bruce) Jenner proved to be the most impressive decathlete in the world; at 14, Romanian Nadia Comaneci earned a perfect 10 score on the uneven bars.

Just three months later, Jenner would be present—this time as an eyewitness—to a multi-discipline competition that was no less compelling, despite the fact that some of its participants were prone to smoking between events. That was the year ABC broadcast the inaugural edition of Battle of the Network Stars, a competition pitting small-screen talent from the three major networks against one another in relay races, kayaking, swimming, golf, and tug of war.

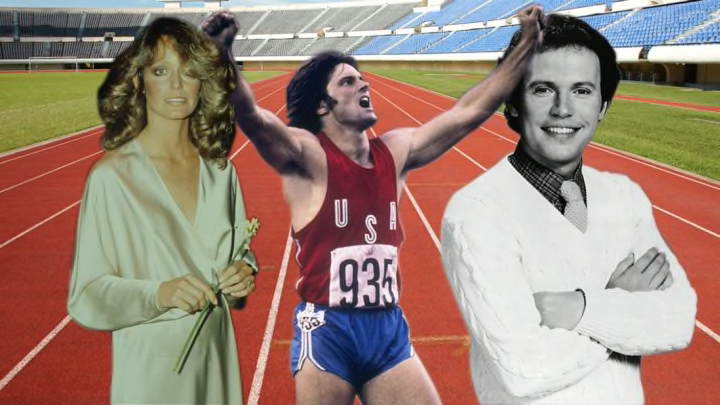

At any given time during the show’s semi-annual airings, viewers could expect to see Gabe Kaplan, Tony Danza, Farrah Fawcett-Majors, O.J. Simpson, Billy Crystal, Michael J. Fox, Ron Howard, Tom Selleck, Scott Baio, and other TV Guide cover subjects making very earnest attempts to outdo one another. While ABC’s motivation was clearly ratings, and viewers were compelled by both male and female stars sporting gym shorts, the participants were recruited with a two-part incentive: Their egos would be challenged, and they could win a lot of money.

Battle’s origins can be traced back to the NBA—specifically, a lack of it. In the mid-1970s, ABC had lost the rights to broadcast National Basketball Association games to CBS, creating a hole in the network's Sunday afternoon programming schedule. An ABC executive named Dick Button proposed a show called Superstars, where well-known athletes would step outside of their comfort zones and try out a new sport.

ABC was elated when Superstars wound up outdrawing CBS’s NBA games in the ratings. The logical progression, according to former ABC executive Don Ohlmeyer, was to use the Superstars format and take advantage of the deep bench of attractive primetime stars appearing on television at the time. In an unlikely bit of collusion, ABC convinced both CBS and NBC to allow their contracted talent to appear on Battle of the Network Stars on the premise that it would amount to free advertising during a rival channel’s airtime.

The three network squads were a who’s-who of ’70s fame. For ABC, team captain Gabe Kaplan (Welcome Back, Kotter) led a charge that included Lynda Carter, Ron Howard, and Penny Marshall; NBC’s crew consisted of captain Robert Conrad, Tim Matheson, Melissa Sue Anderson, and Ben Murphy; CBS appointed Telly Savalas to manage Lee Meriwether, Jimmie Walker, and Mackenzie Phillips.

Conrad would later recall that recruiting for the shows was easy, since “actors have tremendous egos” and took the competition seriously. An additional incentive was the fact that each member of the winning team would receive $20,000. (The amount would eventually go up to $40,000 as the series wound down in the 1980s.)

Despite the overall sheen of ironic detachment from commentator Howard Cosell, former Wild Wild West star Conrad was fiercely competitive. Onetime contestant Melissa Gilbert recalled that Conrad once sent a kayak instructor and kayak to her house so she could practice for the event in her pool. During a relay race, when judges determined NBC had committed a foul, Conrad angrily demanded to face team captain Kaplan in a “run-off” to determine a winner. (Savalas, whose CBS team was destined for third place regardless, puffed on a cigarette and looked on with amusement.) Kaplan overcame an early deficit to surpass Conrad in a 100-meter foot race.

To Ohlmeyer, Conrad’s genuine outrage at the accusation of a foul helped set the tone for the specials, which didn’t appear to soften the events for the amateur competitors. Bikes were mounted without helmets or knee pads; Gilbert recalled seeing broken bones, sprained ankles, and contestants passing out from the heat; Falcon Crest star Lorenzo Lamas once took a spill off a cliff during a bike race, and landed in a ditch.

Several competitors had athletic backgrounds. Tony Danza was a former professional boxer; Mark Harmon was a quarterback at UCLA; Kurt Russell played minor league baseball. But an athletic background was no prerequisite: ABC was under no illusion about why many viewers were tuning in. Men like Lamas and Tom Selleck were of significant interest to audiences once they had disposed of their shirts, while the sight of a jogging Carter or Fawcett-Majors appealed to another demographic. “Giggly, jiggly starlets” is how Detroit Free Press columnist Mike Duffy described the action of the 1980 special, chiding producers for the shamelessness of dangling Dallas star Charlene Tilton over a dunk tank.

With a rotating cast, Battle taped most of its events at Pepperdine University in Malibu, California, airing twice a year through 1985. Devoted viewers would eventually be treated to the surreal spectacle of Tony Randall or William Shatner leading a sports team or David Letterman paddling shirtless in a kayak while Dick Van Dyke commentated on the action. During one climactic tug of war, Conrad recalled that the teams spent over 14 minutes locked in a stalemate.

It seemed viewers would never tire of such high drama, but Battle's novelty eventually wore thin. The 1985 season was its last, with brief revivals attempted in 1988 and 2003. More recently, ABC announced a reboot scheduled for June 2017 that will feature many of the show's previous participants: Lorenzo Lamas, Erik Estrada, Jimmie Walker, and Mackenzie Phillips will all be there. It might be diverting and it might not, but the sight of a celebratory Lynda Carter kissing Gabe Kaplan while Telly Savalas moodily drags on his cigarette is a scene unlikely to ever be matched.