For 60 years, American drivers unknowingly poisoned themselves by pumping leaded gasoline into their tanks. Here is the lifelong saga of Clair Patterson—a scientist who helped build the atomic bomb and discovered the true age of the Earth—and how he took on a billion-dollar industry to save humanity from itself.

Walter Dymock didn’t mean to jump out his second-story bedroom window.

He was queasy, not out of his mind. But on a mild October night in 1924, shortly after Dymock groggily tucked himself into bed, something within him snapped. Like a man possessed, Dymock rose, fumbled through the dark, opened his window, and leapt into his garden.

Hours later, a passerby discovered him lying in the dirt, still breathing. He was hurried to a hospital.

Dymock wasn’t alone. Many of his coworkers were acting erratically too. Take William McSweeney. One night that same week, he had arrived home feeling ill. By sunrise, he was thrashing at phantoms. His family rang the police for help—it would take four men to wrap him in a straitjacket. He’d join his co-worker William Kresge, who had mysteriously lost 22 pounds in four weeks, in the hospital.

A few miles away, Herbert Fuson was also losing his grip on reality. He'd be restrained in a straitjacket, too. The most troubling case, however, belonged to Ernest Oelgert. He had complained of delirium at work and was gripped by tremors and terrifying hallucinations. “Three coming at me at once!” he shrieked. But no one was there.

One day later, Oelgert was dead. Doctors examining his body observed strange beads of gas foaming from his tissue. The bubbles "continued to escape for hours after his death."

“ODD GAS KILLS ONE, MAKES FOUR INSANE,” screamed The New York Times. The headlines kept coming as, one by one, the four other men died. Within a week, area hospitals held 36 more patients with similar symptoms.

All 41 patients shared one thing in common: They worked at an experimental refinery in Bayway, New Jersey, that produced tetraethyl lead, a gasoline additive that boosted the power of automobile engines. Their workplace, operated by Standard Oil of New Jersey, had a reputation for altering people’s minds. Factory laborers joked about working in a “loony gas building.” When men were assigned to the tetraethyl lead floor, they'd tease each other with mock-solemn farewells and "undertaker jokes."

They didn’t know that workers at another tetraethyl lead plant in Dayton, Ohio, had also gone mad. The Ohioans reported feeling insects wriggle over their skin. One said he saw “wallpaper converted into swarms of moving flies.” At least two people died there as well, and more than 60 others fell ill, but the newspapers never caught wind of it.

This time, the press pounced. Papers mused over what made the “loony gas” so deadly. One doctor postulated that the human body converts tetraethyl lead into alcohol, resulting in an overdose. An official for Standard Oil maintained the gas’s innocence: “These men probably went insane because they worked too hard,” he said.

One expert, however, saw past the speculation and spin. Brigadier General Amos O. Fries, the Chief of the Army Chemical Warfare Service, knew all about tetraethyl lead. The military had shortlisted it for gas warfare, he told the Times. The killer was obvious—it was the lead.

Meanwhile, a thousand miles west, on the prairies and farms of central Iowa, a 2-year-old boy named Clair Patterson played. His boyhood would go on to be like something out of Tom Sawyer. There were no cars in town. Only a hundred kids attended his school. A regular weekend entailed gallivanting into the woods with friends, with no adult supervision, to fish, hunt squirrels, and camp along the Skunk River. His adventures stoked a curiosity about the natural world, a curiosity his mother fed by one day buying him a chemistry set. Patterson began mixing chemicals in his basement. He started reading his uncle’s chemistry textbook. By eighth grade, he was schooling his science teachers.

During these years, Patterson nurtured a passion for science that would ultimately link his fate with the deaths of the five men in New Jersey. Luckily for the world, the child who’d freely roamed the Iowa woods remained equally content to blaze his own path as an adult. Patterson would save our oceans, our air, and our minds from the brink of what is arguably the largest mass poisoning in human history.

The tragedy began at the factories in Bayway, New Jersey. It would take Clair Patterson’s whole life to stop it.

In 1944, American scientists raced to finish the atomic bomb. Patterson, then in his early twenties and armed with a master’s degree in chemistry, counted himself among the many young scientists assigned to a secret nuclear production facility in Oak Ridge, Tennessee.

Tall, lanky, and sporting a tight crew cut, Patterson was a chemistry wunderkind who had earned his master’s in just nine months. His talents in the lab convinced an army draft board to deny him entry into the military: His battlefield, they insisted, would be the laboratory; his weapon, the mass spectrometer.

A mass spectrometer is like an atomic sorting machine. It separates isotopes, atoms of the same element that carry different numbers of neutrons. (An atom of uranium, for example, always contains 92 protons, 92 electrons, and a varying population of neutrons. Uranium-235 has 143 neutrons. Its cousin, uranium-238, has three more.) A mass spectrometer is sensitive enough to tell the difference. Patterson's job was to separate them.
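The neutron arithmetic in that parenthetical can be checked in a few lines. What follows is a minimal Python sketch, not anything Patterson ran at Oak Ridge; the function name `neutron_count` and the constant `URANIUM_PROTONS` are made up for illustration.

```python
# Illustrative only: the names below are hypothetical, not from the article.
URANIUM_PROTONS = 92  # every uranium atom carries 92 protons


def neutron_count(mass_number, protons=URANIUM_PROTONS):
    """Neutrons = mass number (protons plus neutrons) minus protons."""
    return mass_number - protons


if __name__ == "__main__":
    for mass_number in (235, 238):
        print(f"uranium-{mass_number}: {neutron_count(mass_number)} neutrons")
    # The gap between the two isotopes, also shown as a share of
    # uranium-238's mass number.
    gap = neutron_count(238) - neutron_count(235)
    print(f"difference: {gap} neutrons ({gap / 238:.1%} of the mass number)")
```

That sliver of extra mass is the only handle a mass spectrometer has for telling the two isotopes apart.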

“You see the isotope of uranium that [the military] wanted was uranium-235, which is what they made the nuclear bomb out of,” Patterson told historian Shirley Cohen in a 1995 interview. “But 99.9 percent of the original uranium was uranium-238, and you couldn't make a bomb out of that … [Y]ou could separate them using a mass spectrometer.”

The machines in Oak Ridge consumed the room. The magnets were "like a football track," Patterson recalled. "They had little collection boxes ... So you could take a bunch of this stuff and put it in, and then when you got it out, you had the enriched 235 over in one box."

In August 1945, the United States dropped atomic bombs on Hiroshima and Nagasaki, the first of them fueled by that enriched uranium, killing upwards of 105,000 people. Six days after a mushroom cloud swallowed Nagasaki, Japan surrendered. Patterson was horrified.

After the war, he returned to civilian life as a chemistry Ph.D. student at the University of Chicago. He’d continue working with mass spectrometers, but no longer would he use the technology to edge the planet closer to the End Times. Instead, he’d use it to discover the Beginning of Time.

The age of Earth has invited speculation for millennia. In the 3rd century, Julius Africanus, a Libyan pagan-turned-Christian, compiled Hebrew, Greek, Egyptian, and Persian texts to write one of the first chronologies of world history. He tallied the lifespans of Biblical patriarchs such as Adam (a ripe 930 years) and Abraham (a measly 175 years) and matched them with historical events. Africanus concluded the Earth was around 5720 years old, an estimate that stuck in the West for 15 centuries.

The first glimmers of the Enlightenment shattered that number, which eventually bloated from the thousands, to the millions, to the billions. By the time Patterson stepped onto the Chicago campus, scientists pegged the Earth’s age at 3.3 billion years. However, an aura of mystery and uncertainty still surrounded the number.

After years of working on military projects, researchers at the University of Chicago were itching to do science for science’s sake again. The university accommodated science’s most celebrated minds: Willard Libby, the pioneer of carbon dating; Harold Urey, who’d later jolt our understanding of life’s origins; and Harrison Brown, Patterson’s advisor. Brown was no slouch himself. A nuclear chemist with an appetite for Big Questions, he enjoyed “cantilevering out into the lonely voids of protoknowledge,” Patterson recalled. He liked dragging his grad students out there with him.

For one, Brown pondered new uses for uranium isotopes. Over time, these isotopes disintegrate into atoms of lead. The process, radioactive decay, takes millions of years, but it always occurs at a constant rate: Half of any sample of uranium-235 decays in 703 million years, and half of any sample of uranium-238 decays in 4.5 billion years. Uranium isotopes are basically atomic timepieces. Brown knew that if somebody worked out the ratio of uranium to lead inside an old rock, he could learn its age.

That included Earth itself.
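To see how a uranium-to-lead ratio turns into an age, here is a simplified Python sketch. It is not Brown's equation, which this account does not spell out. It assumes a rock that started with no lead of its own and neither gained nor lost uranium or lead afterward, and it uses the rounded half-lives quoted above. The names `decay_constant` and `age_from_lead_ratio` are hypothetical.

```python
import math

# Rounded half-lives as quoted in the article, in years.
HALF_LIFE_U235_YEARS = 703e6
HALF_LIFE_U238_YEARS = 4.5e9


def decay_constant(half_life_years):
    """Decay constant (per year) for a given half-life."""
    return math.log(2) / half_life_years


def age_from_lead_ratio(lead_per_uranium, half_life_years):
    """Age in years from the ratio of decay-produced lead atoms to
    surviving uranium atoms.

    Assumption (ours, for illustration): all the lead counted here came
    from decay of this uranium, and the rock stayed a closed system.
    With N uranium atoms left and D lead atoms produced,
    D = N * (exp(rate * t) - 1), so t = ln(1 + D / N) / rate.
    """
    if lead_per_uranium < 0:
        raise ValueError("ratio cannot be negative")
    return math.log1p(lead_per_uranium) / decay_constant(half_life_years)


if __name__ == "__main__":
    # Equal parts decay-produced lead and surviving uranium-238.
    age = age_from_lead_ratio(1.0, HALF_LIFE_U238_YEARS)
    print(f"estimated age: {age / 1e9:.2f} billion years")
```

Under these assumptions, a rock holding one atom of decay-produced lead for every surviving atom of uranium-238 comes out exactly one half-life old. Any stray lead that sneaks into the sample inflates the ratio and therefore the age, which is why contamination mattered so much.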

Brown worked out a mathematical equation to nail the age of the Earth, but, to solve it, he needed to analyze rock samples 1000 times smaller than anybody had ever measured before. Brown needed a protégé, somebody experienced tinkering with a mass spectrometer and uranium, to make it happen. One day, he summoned Patterson into his office.

“What we’re going to do is learn how to measure the geologic ages of a common mineral that’s about the size of a head of a pin,” Brown explained. “You measure its isotopic composition and stick it into the equation … And you’ll be famous, because you will have measured the age of the Earth.”

Patterson mulled it over. “Good, I will do that.”

Brown smiled. “It will be duck soup, Patterson.”

Harrison Brown, let's just say, had a habit of stretching the truth: Solving one of mankind’s oldest questions was not remotely "duck soup." Patterson joined another graduate student, George Tilton, and together they analyzed rocks with a known age as a test run. Wanting to ensure that Brown’s formula—and their methods—were correct, the duo started each experiment with the same routine. First they'd crush granite, then Tilton would measure the uranium as Patterson handled the lead.

But the numbers always came out goofy. “We knew what the amount of lead should be, because we knew the age of the rock from which it came,” Patterson said. But the data was in the stratosphere.

A lightbulb moment rescued them when Tilton realized that the lab itself might be contaminating their samples. Uranium had been tested there previously, and perhaps tiny traces of the element lingered in the air, skewing their data. Tilton moved to a virgin lab, and when he tried again, his numbers emerged spotless.

Patterson figured he had the same problem. He tried to remove lead contamination from his samples. He scrubbed his glassware. Too much lead. He used distilled water. Too much lead. He even tested blank samples that, to his knowledge, contained no lead at all.

Lead still showed up.

“There was lead there that didn’t belong there,” Patterson recalled. “More than there was supposed to be. Where did it come from?”

It started as an attempt to save lives. In 1908, a woman’s car stalled on a bridge in Detroit, Michigan. In those days, cars didn’t sputter awake with a twist of the key. Drivers needed to step out and crank the engine by hand. So when a good Samaritan saw the woman stranded, he kindly offered to help. As he wound the crank, the engine kicked alive, and the crank cracked him in the jaw—shattering it. Days later, he died.

The man was Byron Carter, a prominent car manufacturer and a personal friend of Cadillac’s founder, Henry M. Leland.

Distraught, Leland committed his company to building a safer, crankless car. He called upon the inventor Charles Kettering to invent the 1912 Cadillac, which would boast four sleek cylinders, a top speed of 45 mph, a newly invented automatic starter ... and a deafening engine. The car clanged and banged, pinged and clacked. When it chugged up hills, it might as well have been performing Verdi’s "Anvil Chorus." The crankless car had a new problem: engine knock.

When pockets of air and fuel prematurely explode inside an internal combustion engine, you’ll hear a boisterous ping that not only torpedoes your eardrums, but also prevents the engine from operating at full tilt. That’s engine knock. With the Ford Model T walloping Cadillac in sales, Kettering was hell-bent on stopping it.

In 1916, Kettering melded minds with a young scientist named Thomas Midgley Jr., and the two assembled a team to search for a gasoline additive to silence the racket. They added hundreds (possibly thousands) of substances to the gas, with little luck. Even Henry Ford chipped in, supplying a concoction he dubbed “H. Ford’s Knock-knocker.” (Test results returned with a resounding “meh.”)

In 1921, a breakthrough came in the name of tellurium, an element that reduced knock and—as historian Joseph C. Robert describes in his book Ethyl—smelled like Satan’s gym locker. “There was no getting rid of it,” Midgley said. “It was so powerful that a change of clothes and a bath at the end of the day did not reduce your ability as a tellurium broadcasting station.” The smell was so noxious that Midgley’s wife banished him to sleep in the basement for seven months. When Chevrolet built a test car running on tellurium fuel, engineers nicknamed the automobile “The Goat,” partly because it climbed mountains like magic, and partly because the exhaust spat out a perfume reminiscent of a ruminant’s posterior.

The search continued until December 9, 1921, when Midgley’s team poured tetraethyl lead into an engine sloshing with kerosene.

The knock was silenced. The engine purred. The scientists rejoiced.

Leaded gasoline promised everything Kettering and Midgley hoped for. It was plentiful. It was cheap. It didn’t smell. The group marketed the product as “Ethyl” gasoline—deliberately omitting any mention of the word lead—and General Motors and Standard Oil of New Jersey kickstarted a new company, the Ethyl Corporation, to produce it.

In February 1923, a gas station attendant in Dayton, Ohio, ladled a teaspoon of tetraethyl lead into a vehicle’s tank, recording the first sale of leaded gasoline. Months later, a handful of racecar drivers competing in the Indianapolis 500 tried leaded gasoline and took first, second, and third place. Word spread that a miracle liquid made car engines stronger, faster, and quieter.

As the gas hit the market and excitement mounted, Midgley retreated to Florida.

He was sick. His body temperature kept dipping. “I must overcome this slight error or I shall soon be classified as a cold-blooded reptile,” he joked to a colleague. He hoped a few weeks of golfing in warmer climes would solve the problem, but when he returned home a month later, his body still couldn’t keep a normal temperature. It was lead poisoning.

Lead makes humans sick because the body confuses it with calcium. The most abundant mineral in the human body, calcium helps oversee blood pressure, blood vessel function, muscle contractions, and cell growth. As the milk cartons boast, it keeps bones strong. In the brain, calcium ions bounce between neurons to help keep the synapses firing. But when the body absorbs lead, the toxic metal swoops in, replaces calcium, and starts doing these jobs terribly—if at all.

The consequences can be terrifying. Lead interferes with the body’s battalion of antioxidants, damaging DNA and killing neurons. Neurotransmitters, the chemical paperboys of the brain, stop delivering messages and start murdering nerve cells. Lead inhibits the brain’s development by stonewalling the process of synapse pruning, heightening the risk of learning disabilities. It also weakens the blood-brain barrier, a protective liner in your skull that blocks microscopic villains from infiltrating the brain; the resulting damage can lower IQs and even cause death. Lead poisoning is rarely caught in time. The heavy metal debilitates the mind so slowly that any impairment usually goes unnoticed until it’s too late.

Poisoning from pure tetraethyl lead, however, works differently. It moves quickly. Just a few teaspoons directly applied to the skin can kill. After soaking the dermis, it leaches into the brain, and, within weeks, causes symptoms similar to rabies: hallucinations, tremors, disorientation, and death. It’s not a miracle motor drug. It’s concentrated poison.

Midgley would recover, but the same could not be said for his employees. During the spring of 1924, two workers in Dayton, Ohio, died under his watch. Dozens more went insane. Midgley knew the men and, freighted with guilt, sank into depression and pondered removing leaded gasoline from the market. Kettering coaxed him out of it. Instead, he hired a young man named Robert Kehoe to make the toxin safer in factories.

Whip-smart and reticent, Kehoe was a young assistant professor of pathology at the University of Cincinnati. The new gig would change his life. He’d rise to become the singular medical authority on, and scientific spokesman for, the safety of leaded gasoline. He’d supervise a research laboratory that received bottomless funding from a web of corporations such as GM, DuPont, and Ethyl.

Kehoe’s first assignment was to investigate the Dayton deaths. He met about 20 injured workers and concluded that heavy lead fumes had sunk to the factory floor and poisoned the men. Don’t abandon tetraethyl lead, Kehoe advised. Just install fans in the factory.

With that, business resumed. Then came the tragedy at Bayway, New Jersey.

Five men dead and dozens more clinging to reality. That’s how New York’s yellow press painted the scene. A Yale physiology professor named Yandell Henderson took to the media to skewer tetraethyl lead producers, telling The New York Times the product was “one of the greatest menaces to life, health and reason.” Henderson had studied the risks during World War I. “This is one of the most dangerous things in the country today,” he told the Times. Henderson went as far as to say that if he had a choice between tuberculosis and lead poisoning, he’d choose tuberculosis.

Henderson worried about car exhaust. Tailpipes burped lead dust into the air pedestrians and residents breathed. Every 200 gallons of gas emitted a pound of toxins into the air. In an interview, Henderson prophesied that, “It seems more likely that the conditions will grow worse so gradually and the development of lead poisoning will come on so insidiously (for this is the nature of the disease) that leaded gasoline will be in nearly universal use and large numbers of cars will have been sold that can run only on that fuel before the public and the government awaken to the situation.”

Standard Oil’s response: “We are not taking Dr. Henderson’s statement seriously.” The alarmism, a representative said, was “bunk.” The industry claimed it had the issue all figured out. It had commissioned a study that exposed 100 pigs, rabbits, guinea pigs, dogs, and monkeys to leaded engine fumes every day for eight months. No signs of lead poisoning were found. (A dog did have five puppies.)

The study was flawed. As journalist Sharon Bertsch McGrayne writes in Prometheans in the Lab, “the Ethyl Corporation also demanded and was given a veto over the study’s content and publication.” Any troubling results, if they existed, could have been silenced.

In May 1925, the Surgeon General called a conference in Washington, D.C. to discuss the controversy. As a PR precaution, the Ethyl Corporation suspended sales of leaded gasoline and held its breath. The company’s team, spearheaded by Kehoe, prepared a defense that argued against a ban: Lead companies simply had to make factories safer for their workers.

Months later, a committee appeared to agree. It determined there were “no good grounds for prohibiting the use of Ethyl gasoline.” Ethyl resumed sales. Signs hanging above roadside service stations in 1926 rang in the news: “ETHYL IS BACK.”

The feds gave lip service to critics like Henderson, advocating that independent researchers should continue investigating leaded gasoline. But it never happened. In fact, independent researchers failed to study leaded gasoline for the next four decades.

For 40-plus years, the safety of leaded gasoline was studied almost entirely by Kehoe and his assistants. That entire time, Kehoe’s research on tetraethyl lead was funded, reviewed, and approved by the companies making it.

Kehoe and the Ethyl Corporation would maintain this monopoly until Clair Patterson, scratching his head in a Chicago laboratory, wondered why so much lead was fouling his beloved rocks.

Patterson analyzed each step of his procedure, from start to finish, to pinpoint the lead’s origins. “I found out there was lead coming from here, there was lead coming from there; there was lead in everything that I was using...” he later said. “It was contamination of every conceivable source that people had never thought about before.”

Lead came from his glassware, his tap water, the paint on the laboratory walls, the desks, the dust in the air, his skin, his clothes, his hair, even motes of wayward dandruff. If Patterson wanted to get accurate results, he had little choice but to become the world’s most obsessive neat freak.

As journalist Lydia Denworth describes in her book, Toxic Truth, Patterson went to enormous lengths to rid his lab of contaminants. He bought Pyrex glassware, scoured it, dunked it in hot baths of potassium hydroxide, and rinsed it with double-distilled water. He mopped and vacuumed, dropping to his hands and knees to buff out any traces of lead from the floor. He covered his work surfaces with Parafilm and installed extra air pumps in his lab’s fume hood—he even built a plastic cage around it to prevent airborne lead from hitchhiking on dust. He wore a mask and gown and would later cloak his body in plastic.

The intensity of these measures was unusual for the time. It would be another decade before the laminar-flow “Ultra Clean Lab” (the grandfather of the antiseptic, high-security, air-locked laboratory you see in sci-fi movies) would be patented. Patterson's contemporaries simply didn’t know that approximately 3 million microscopic particles floated around the typical lab, each particle a barrier obstructing The Truth.

Five years would pass before Patterson finally perfected his own ultraclean techniques. In 1951, he managed to prepare a totally uncontaminated lead sample and confirmed the age of a billion-year-old hunk of granite, an accomplishment that earned him a Ph.D. The next step was to use the same procedure to find the age of the Earth. Funding was all that stood in his way.

Patterson applied for a grant through the U.S. Atomic Energy Commission, but the AEC rejected the proposal, prompting Harrison Brown to step in and rewrite it, inflating the language to make false—but profitable—promises: Patterson's work, he claimed, could help the commission develop uranium fuel.

As Patterson recalled, “He was telling them fibs, actually.” But the lies worked. Patterson got the money, and he eventually followed Brown west to start a new job at the California Institute of Technology.

At Caltech, Patterson built the cleanest laboratory in the world. He tore out lead pipes in the geology building and re-wired the walls (lead solder coated the old wires). He installed an airflow system to pump in purified, pressurized air and built separate rooms for grinding rocks, washing samples, purifying water, and analysis. The geology department funded the overhaul by selling its fossil collection.

Patterson knighted himself the kingpin of clean. “You know Pigpen, in Charlie Brown’s comic, where stuff is coming out all over the place?” he told Cohen. “That’s what people look like with respect to lead. Everyone. The lead from your hair, when you walk into a super-clean laboratory like mine, will contaminate the whole damn laboratory. Just from your hair.”

By 1953, the ultraclean lab was ready. As Patterson prepared the sample that would help him find the age of the Earth, he became increasingly prickly. He demanded that his assistants scrub the floor with small wipes daily. Later, he’d ban street clothes and require his assistants to wear Tyvek suits (scientific onesies).

When the sample was ready, Patterson traveled to the Argonne National Laboratory to use their mass spectrometer. Late one night, the machine spat out numbers. Patterson, alone in the lab, plugged them into Brown’s old equation: The Earth was 4.5 billion years old.

Overcome with glee, Patterson sped to his parents' home in Iowa. Instead of cutting a cake in celebration, his parents rushed him to the emergency room, convinced their overexcited son was having a heart attack.

In 1956, Patterson published his number in Geochimica et Cosmochimica Acta. Critics bristled. “I had some of the best, most able critics in the world trying to destroy my number,” he said. Each time they tried to prove it wrong, they failed. At one point, an evangelist knocked on Patterson’s door to kindly inform him that he was going to Hell.

Discovering the age of the Earth was one of the greatest scientific accomplishments of the 20th century, yet Patterson couldn’t kick back and relish it. Lead contamination, he learned, was ubiquitous, and nobody else knew it. He was clueless as to where the lead originated. All he knew was that every scientist in the world studying the metal—from the lead in space rocks to the lead in a human body—must be publishing bad numbers.

That included Robert Kehoe.

After the two deaths in Dayton in 1924, Kehoe became one of the first people in the chemical industry to propose standard workplace safety measures. He stressed that employees needed to be trained before they handled dangerous chemicals. He pushed for better ventilation in plants. He tracked the health of workers. He saved lives, and ultimately, the profits to be made off leaded gasoline.

After the disaster in New Jersey, as critics questioned the safety of car exhaust, Kehoe scoffed. “When a material is found to be of this importance for the conservation of fuel and for increasing the efficiency of the automobile, it is not a thing which may be thrown into the discard on basis of opinion,” he said at the conference with the Surgeon General. “It is a thing which should be treated solely on the basis of facts.” The government agreed, and it deferred the expense of future studies to “the industry most concerned.”

In other words, “The research that might discover an actual hazard from tetraethyl lead was in Kehoe’s hand,” write Benjamin Ross and Steven Amter in The Polluters. Kehoe’s lab held a near monopoly on lead poisoning research. The Ethyl Corporation, General Motors, DuPont, and other gas giants bankrolled his research to the tune of a $100,000 salary (about $1.4 million today).

Kehoe’s contract stipulated that, before publishing, each manuscript had to be “submitted to the Donor for criticisms and suggestions.” In other words, as Devra Davis writes in The Secret History of the War on Cancer, “the same businesses that produced the materials Kehoe tested also decided what findings could and could not be made public.” It was a colossal conflict of interest.

Kehoe played along. When data threatened his client’s bottom line, the study gathered cobwebs. During World War II, Kehoe visited Germany with the U.S. military and discovered reports that the chemical benzidine caused bladder cancer. This was an issue—his client, DuPont, made benzidine. But rather than alert American workers to the risk, Kehoe stuffed the report in a box. The moldy records were unearthed decades later when DuPont’s employees, stricken with cancer, sued.

Kehoe also understood the dangers of lead paint. By the early 1940s, many European countries had already banned it, and even Kehoe worried about it in his personal letters. Yet when the American Journal of Diseases of Children sounded sirens that lead paint harmed children, Kehoe didn’t use his star power to stop the Lead Industries Association from suggesting that afflicted kids were “sub-normal to start with.”

Kehoe also made mistakes that might have been caught had his work been subject to independent scrutiny. In one study, Kehoe measured the blood of factory workers who regularly handled tetraethyl lead and those who did not. Blood-lead levels were high in both groups. Rather than conclude that both groups were poisoned by the lead in the factory’s air, Kehoe concluded that lead was a natural part of the bloodstream, like iron. This mistake would grow into an unshakeable industry talking point.

Kehoe's research also led him to wrongly believe that a quantifiable threshold for lead poisoning existed. In his view, the toxin was harmless as long as a person’s blood contained less than 80 micrograms per deciliter (μg/dL) of lead. Somebody with a blood-lead level of 81 μg/dL? Poisoned. Somebody with a blood-lead level of 79 μg/dL? At risk, but fine.

That’s not how lead poisoning behaves. It’s not a you-have-it-or-you-don’t illness. It’s a matter of degree. You can be barely poisoned, slightly poisoned, mildly poisoned, moderately poisoned, significantly poisoned, extremely poisoned, fatally poisoned. A lot of damage can occur before you hit the 80 μg/dL benchmark. (For reference, the CDC today shows concern if blood-lead levels exceed 5 μg/dL.)

Kehoe’s two errors—that lead is natural to the human body, and that a poisoning threshold existed—were folded into policy and understood by the industry, government regulators, the press, and the public as gospel. To millions of people, Kehoe’s discoveries were “the facts.” He was awarded positions such as President of the American Academy of Occupational Medicine; Director of the Industrial Medical Association; President of the American Industrial Hygiene Association; and Vice Chairman of the Council of Industrial Health for the American Medical Association, among countless other seats. Kehoe was held in such high esteem, the journal Archives of Environmental Health dedicated an issue in his honor.

And he had lead all wrong.

Green in the face and clutching his stomach, Clair Patterson hung over the boat’s railing as his breakfast reintroduced itself.

After determining the age of the Earth in 1953, Patterson set out to answer a new riddle: How did Earth’s crust form? He knew studying lead in ocean sediments could provide the answer, so he set his sights on the sea. But a sailor’s life was not for him. As he recalled, “I got sicker than a dog! I didn’t know what the hell I was doing. I hated it!”

Again, a fib courtesy of Harrison Brown subsidized Patterson’s research. Brown had pitched the idea to the petroleum industry with the false promise that drilling for ancient sand could benefit oil companies. “Harrison got money from them every year, huge amounts, to fund the operation of my laboratory, which had nothing whatsoever to do with oil in any way, shape, or form,” Patterson later said.

With the American Petroleum Institute’s dollar, Patterson collected samples of sediment and water columns in the Pacific Ocean, off Los Angeles; the central Atlantic, near Cape Cod; the Sargasso Sea, near Bermuda; and the Mediterranean.

Patterson knew that if he compared the lead levels in shallow and deep water, he could calculate how oceanic lead has changed over time. Recently deposited by rain storms and rivers, water churning near the sea’s surface is younger than water that has sunk to the seafloor. The same strategy applied to sediment. Sand resting atop the seafloor is relatively new, but sediment buried 40 feet below is older. In geology circles, it’s called the Law of Superposition: the deeper the strata, the older.

Patterson collected samples from all depths and returned to his ultraclean lab. “Then a very bad thing happened,” he recalled. He found that the samples of young water contained about 20 times more lead than the samples of older, deeper water.

This was not normal.

Mining the literature for an explanation, Patterson stumbled on data about leaded gasoline. The numbers correlated. “It could easily be accounted for by the amount of lead that was put into gasoline and burned and put in the atmosphere,” he later explained.

With oil companies financing Patterson’s work, he couldn’t help but think, We’re in serious trouble. Then he published the numbers anyway.

Over the previous nine years, the oil industry had awarded Patterson about $200,000. But the minute he published a paper in Nature blaming the industry for abnormal lead concentrations in snow and sea water, the American Petroleum Institute rescinded its funding. Then his contract with the Public Health Service dissolved. At Caltech, a member of the board of trustees—an oil executive whose company peddled tetraethyl lead—called the university president and demanded they shut Patterson up.

One day, the petroleum industry knocked on Patterson's door. The four oil executives (or, as Patterson termed them, “white shirts and ties”) acted friendly. They showed him a résumé of ongoing projects and wondered if he’d like money to study something new. “[They tried to] buy me out through research support that would yield results favorable to their cause,” Patterson remembered. Instead of shooing the suits away, Patterson asked them to sit before a lectern as he explained, bluntly, “how some future scientists would obtain explicit data showing how their operations were poisoning the environment and people with lead. I explained how this information would be used in the future to shut down their operations.”

After the free lecture, the men left. Later, Patterson would learn that the industry had asked the Atomic Energy Commission to stop subsidizing his work. “They went around and tried to block all my funding,” he recalled.

Denworth's book Toxic Truth details how the industry attempted to paint Patterson as a nutjob—which, in fairness, was not difficult. Patterson was eccentric. On smoggy Pasadena days, he’d amble across the quad wearing two different-colored socks and a gas mask. He went distance running when distance running was a hobby for weirdos. He didn’t look or act like a professor. He wore T-shirts, khakis, and desert boots. He refused tenure. Later in his career, he soundproofed his Caltech office and installed two doors, two layers of walls, and two ceilings. As his colleague Thomas Church noted, Patterson was like his rock samples: He did not enjoy being "contaminated" by outside influences.

Kook or not, Patterson’s work attracted Katharine Boucot, editor of Archives of Environmental Health, who asked him to write about oceanic lead. Patterson submitted an essay singed with fire and brimstone that listed all of the possible natural causes for the lead surge: volcanoes, forest fires, soils, sea salt aerosols, even meteorite smoke. He showed his math and explained bluntly that these phenomena could not explain the lead boom. The numbers only added up when he accounted for lead smelting, lead-based pesticides, lead pipes, and “lead alkyls”—that is, gasoline.

His conclusion was dire. The human body probably contained 100 times more lead than natural. “Man himself is severely contaminated,” Patterson said.

Kehoe was asked to peer-review the paper. His response: Patterson's entire line of reasoning was laughable. He was a geologist and a physicist. What did he know about biology?

“The inferences as to the natural human body burdens of lead, are, I think, remarkably naive,” Kehoe wrote. “It is an example of how wrong one can be in his biological postulates and conclusions, when he steps into this field, of which he is so woefully ignorant and so lacking in any concepts of the depths of his ignorance, that he is not even cautious in drawing sweeping conclusions.”

Kehoe could have spiked the paper—he was, after all, lead’s foremost authority—but he greenlighted it anyway, believing publication would destroy Patterson’s credibility. “The issue which he has raised, in this article and by word of mouth elsewhere, cannot be ‘swept under the rug,’” he wrote. “It must be faced and demolished, and therefore, I welcome its ‘public appearance.’”

In 1965, toxicologists lambasted Patterson’s paper. The overarching tenor was stick to rocks and leave the human body to the experts. “Accepted medical evidence proves conclusively that lead in the environment presents no threat to public health,” a statement from the American Petroleum Institute pronounced. Herbert Stockinger, a toxicologist in Cincinnati, complained, “Is Patterson trying to be a second Rachel Carson? Let us hope that this article will prove to be the first and the last on science fiction.”

Patterson was undeterred. His saving grace was a blend of old fashioned stubbornness and a hearty conviction that science, whether accepted by the majority or not, was a gateway to truth. The only way to win over skeptics, he figured, was to do more research. To do that, he’d have to visit the coldest places on the planet. Arctic winds beckoned.

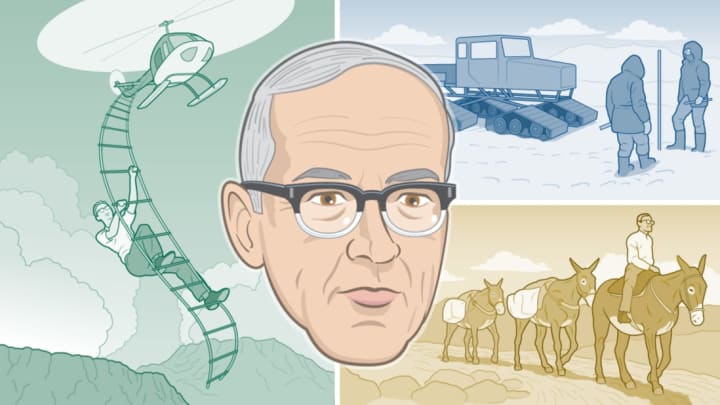

In the summer of 1964, a helicopter dumped Patterson off at the U.S. Arctic Research Center at Camp Century, Greenland. The camp looked sleepy from the air. A blanket of snow littered with oil drums and caterpillar tractors. But about 20 feet below the ice sheet, hundreds of soldiers buzzed in a labyrinth of tunnels that included, along with a theater, library, and post office, several secret annexes. The military called the camp a “polar research station,” but it was also ground zero for Project Iceworm, a secret (and failed) 2500-mile network of tunnels intended to store, and launch, nuclear missiles.

Patterson was through with bombs. He came to dig for giant ice cubes.

In the Arctic, snow acts like sediment. Old snow rests deep under your feet while younger snow settles on top of it. Anyone who digs deep enough can effectively dig back in time. Patterson wanted to compare the lead in ancient ice to new ice and needed to excavate about 100 gallons of it.

Each night, as the soldiers slept, Patterson’s team descended into a sloping ice tunnel a few hundred feet below the surface. At this depth, the snow was 300 years old. The crew wore suits and gloves cleaned in acid. Using acid-washed saws, they slowly cut 2-foot cubes of ice, placed them in giant acid-washed plastic containers, and lugged them out of the tunnel to a plastic-lined trailer at the surface. The ice was melted, placed on military cargo planes, and flown to a lab in California.

While the base was excellent for dredging up ancient ice—they collected samples as old as 2800 years—the surface was too polluted. So, to find pristine new deposits of ice, Patterson and a group of soldiers crammed into three snow tractors and plowed through a storm. Cascades of snow gobbled the sun, and Patterson, who fruitlessly attempted to navigate with a sun compass, had to mark their tracks by stopping and planting a flag every couple of feet. After reaching a desolate snowy plain, they dug a trench 50 feet deep and 300 feet long.

A year later, Patterson relived the episode in Antarctica. With summer temperatures dipping to 10 degrees below zero, his team, shrouded in clear plastic suits, revved electric chain saws and dug tunnels into the snow, 300 feet long and 140 feet deep. They gathered samples from 10 distinct eras. As one member later recalled in Toxic Truth, "It drove Pat nuts that everybody's nose dripped, as it does in the cold. The worry was an unnoticed drip would fall on a block. If your nose did drip, we would take tools and chip a few inches around the spot where it fell."

To harvest younger snow, the team steered a Sno-Cat tractor to an untouched patch of ice 130 miles upwind of their base. “We were forced to knuckle down to the pick, the shovel, and the man-haul, and dig an inclined shaft 100 feet long to provide access to the snow layers that were to be sampled,” Patterson wrote. “One member of the party, in bitter contemplation, calculated that we hoisted nearly 1000 banana boat loads of ice up and out of that slanting hell-hole.”

Back in California, Patterson developed stringent protocols to avoid contamination. It could take days to analyze just one sample. He made researchers wrap their bodies in acid-washed polyethylene bags. Each new sample was handled with a new pair of acid-cleaned gloves. (Years later, when Patterson analyzed more ice cores from Antarctica, he pointed to a spot on an ice sample and told his assistant, Russ Flegal, it was older than Jesus. In the retrospective book Clean Hands, Flegal recalls, “He then told me that if I dropped the core it would be sacrilegious, and that I would be banished from his laboratory for life.”)

The numbers out of Greenland stupefied. The samples showed a “200- or 300-fold increase” in lead from the 1700s to present day. But the most startling jump had occurred in the last three decades.

Talk about smoking guns: Lead contamination had rocketed as car ownership—and gasoline consumption—boomed in North America. By more than 300 percent.

Patterson received a bigger surprise, however, when he surveyed the oldest ice samples. The ice from the 1750s wasn’t pure either. Neither was ice from the year 100 BCE.

Lead pollution was as old as civilization itself.


Data as reported in Murozumi, Chow, and Patterson's paper in Geochimica et Cosmochimica Acta. Graph as represented in Clean Hands. Video credit: Sarah Turbin.

The Copper Age. The Bronze Age. The Iron Age. The great periods of early human progress, stretching from Neolithic times to the advent of writing, are named for metals, the ores that ancient people used to make tools, weapons, pottery, and currency—the glinting sparks of civilization. It’s odd, however, that lead hasn’t forged its name in the history books. Humans have relied on it for millennia.

About 6000 years ago, humans discovered they could extract silver by smelting lead from sulfide ores. Ancient Mesopotamians and Egyptians, and, later, the Chinese used lead to toughen glass. From the Babylonians onward, people glazed pottery with lead. With its low melting point, the soft and malleable metal was a metallurgy miracle.

The concept of money—silver coinage in particular—would pump the first substantial loads of lead into Earth’s atmosphere. Lead was a 300-to-1 byproduct of silver during the heyday of Grecian mining. In a study published in Science, Patterson argued that lead and silver mining stimulated “the development of Greek civilization.”

But it also polluted the atmosphere. And nobody noticed. After Rome took over Greece’s mines, the only pollution the Greek historian Strabo could see was an infestation of “greedy Italians.”

Rome mined lead wherever the Empire could stretch its tentacles—Macedonia, North Africa, Spain, Great Britain—and used the metal for cosmetics, medicines, cisterns, coffins, containers, coins, medals, sling bullets, ornaments. They even used lead acetate, or “sugar of lead,” to sweeten wine.

Between 700 BCE and the height of Roman power, around the start of the first century CE, humans produced 80,000 tons of lead a year. Patterson wrote that “This occurrence marks the oldest large-scale hemispheric pollution ever reported, long before the onset of the Industrial Revolution.”

Ancient people quickly learned that lead was a menace to health. In the first century, Pliny the Elder complained that quaffing lead-sweetened wine caused “paralytic hands.” The Greek medic Dioscorides agreed, describing leaded spirits as “most hurtful to the nerves.”

Unfortunately, few Roman citizens fully grasped the perils of lead poisoning because most people sweating in lead mines were slaves. Working 12-hour days, Roman slave miners dug pits up to 650 feet deep and extracted the metal by setting seams of rock ablaze. Pliny suspected the smoke ravaged their lungs: “While it is being melted, the breathing passages should be protected,” he warned, “otherwise the noxious and deadly vapor of the lead furnace is inhaled; it is hurtful to dogs with special rapidity.” Miners shielded themselves from lead vapors by covering their mouths with the bladders of animals.

Rome’s lust for lead grew with time. In fact, the Eternal City became so swamped in the metal that it forbade the use of lead as currency. Instead, lead was set aside for admission tickets to the circus and theater—and, of course, the city’s hydro-engineering projects.

Lead pipes connected Roman homes, baths, and towns with a glorious network of water. According to Lloyd B. Tepper, writing in the Journal of the Society for Industrial Archeology, the Romans mined 18 million tons of lead between 200 BCE and 500 CE, much of it for pipes. All this time, they were aware of lead’s dangers. The Roman architect Vitruvius begged officials to use terracotta instead. "Water," he pleaded, should “on no account be conducted in leaden pipes if we are desirous that it should be wholesome.”

Rome did not listen. And then it collapsed. “The uses of lead were so extensive that lead poisoning, plumbism, has sometimes been given as one of the causes of the degeneracy of Roman citizens,” writes Jean David C. Boulakia in the American Journal of Archaeology. “Perhaps, after contributing to the rise of the Empire, lead helped to precipitate its fall.”

Ancient ice tells us that, after Rome fell, lead pollution dipped and flatlined until the late 10th century, when silver mines opened near modern Germany, Austria, and the Czech Republic. Lead levels sank again in the 1300s as the Black Death killed 30 percent of Europe’s population but resurged as western society recovered.

In 1498, the Pope banned the practice of adulterating wine with lead. The decree was largely symbolic. At that point, lead was pervasive. It was even in cosmetics. Vannoccio Biringuccio, an Italian metallurgist, observed in his 1540 De La Pirotechnia that “Women in particular are greatly indebted [to white lead], for, with art, it disposes a certain whiteness, which, giving them a mask, covers all their obvious and natural darkness, and in this way deceives the simple sight of men by making dark women white and hideous ones, if not beautiful, at least less ugly.” (Some charmer.)

Intellectuals continued ringing alarms, but nobody took heed. Instead, entire buildings were devoted to the production of lead. European skylines were punctuated by shot towers, where molten lead slithered down ramps to form bullets. Louis Tanquerel des Planches, a French physician, remarked that shotmakers suffered from “lead colic.”

In colonial America, Benjamin Franklin noticed that printers—who depended on lead as a type metal—suffered from the same “paralytic hands” Pliny the Elder observed centuries earlier. Franklin also mentioned that, in 1786, North Carolinians complained that lead-distilled rum from New England caused “dry belly-ache with a loss of the use of their limbs.”

Like Rome, British and early American cities opted to flush their municipal water through lead pipes. In lead-loving New England, infant mortality and stillbirths were 50 percent more common than in locales that used another metal. People knew lead was responsible. In England, a pathologist named Arthur Hall recommended that any woman who needed an abortion should just drink the tap water. On the black market, lead was the main ingredient in abortion pills.

In the 20th century, lead paint was marketed as a replacement for wallpaper. The Dutch Boy Paint Company, the dominant lead paint manufacturer, targeted children by selling paint coloring books with jingles: “This famous Dutch Boy Lead of mine can make this playroom fairly shine!” In one book, the Dutch Boy Lead Party, a boy—a member of the “Lead family”—carries a paint bucket and frolics with a pair of anthropomorphic shoes who sing,

You know when we were moulded the man who made us said. We’re strong and tough and lively because in us there’s lead.

In 1923, the National Lead Company bought ads in National Geographic exclaiming “Lead helps to guard your health!” That same year, Thomas Midgley Jr. and Charles Kettering added lead to gasoline.

Men died. Hospitals filled. And people still vouched for the metal's safety. In the 1930s, a lead advocacy group proudly claimed, “In many cities, we have successfully opposed ordinance or regulation revisions which would have reduced or eliminated the use of lead.”

Between 1940 and 1960, as public health experts David Rosner and Gerald Markowitz write in Lead Wars, the amount of lead produced for American gas tanks increased eightfold.

By 1963, nearly 83 million Americans owned a car.

It was 1966, and Robert Kehoe sat before the Subcommittee on Air and Water Pollution in Washington, D.C. and felt the gaze. He had come to offer his expertise on airborne lead. He had testified before dozens of committees in his career and, for decades, had been revered by a revolving door of policymakers. This time was different.

A year earlier, the U.S. Public Health Service had held a symposium to discuss the risks of leaded gasoline. Forty years had passed since the government had last called such a meeting, but America was in the midst of an environmental awakening. Rachel Carson’s 1962 book Silent Spring uncorked a bombshell condemning the pesticide DDT as a carcinogen. Secretary of the Interior Stewart Udall had published The Quiet Crisis, a rallying cry for conservationists. Mounting medical evidence showed that low levels of lead—far below Kehoe’s 80 μg/dL threshold—could harm children. And Patterson’s research had reignited the debate over car exhaust.

At the symposium, Kehoe recited his canned talking points: There is a threshold for poisoning. The body has adapted to lead in the environment naturally. But this time, Kehoe's feet were held to the fire. Harry Heimann, of Harvard’s School of Public Health, griped, “[It’s] extremely unusual in medical research that there is only one small group and one place in a country in which research in a specific area of knowledge is exclusively done.” Kehoe appeared surprised. “I seem to be a bit under the gun,” he said.

The next year, as Kehoe sat in the Senate Office Building, he faced a panel of skeptical legislators, including the committee chairman, Edmund Muskie. Imposing and plainspoken, Muskie became a champion of environmental causes after he learned that polluted rivers in his home state of Maine had prevented new businesses from putting down roots. As chairman, he had the power to suggest amendments to the newly established Clean Air Act. He invited 16 experts to Washington, including Kehoe and a D.C. newcomer: Clair Patterson.

Kehoe bristled at the thought of having to explain his life’s work to a panel of lawyers. “I’m afraid we would be here the rest of the week if I were to undertake to do this,” he said.

With that, the cross-examination began.

Muskie: “Does medical opinion agree that there are no harmful effects and results from lead ingestion below the level of lead poisoning?”

Kehoe: “I don’t think that many people would be as certain as I am at this point.”

Muskie: “But you are certain?”

Kehoe: “It so happens that I have more experience in this field than anybody else alive.”

...

Muskie: “It is your conclusion that in 1937, to the present time, on the basis of that data, that there has been no increase in the amount of lead taken in from the atmosphere by traffic policemen, by attendants at service stations or by the average motorist?”

Kehoe: “There is not the slightest evidence that there has been a change in this picture during this period of time. Not the slightest.”

One week later, Patterson testified. With characteristic bluntness, he called Kehoe’s lead poisoning “threshold” a fantasy. He torched the Public Health Service for trusting numbers supplied by the industry, calling it “a direct abrogation in violation of the duties and responsibilities of those public health organizations.”

Besides, their numbers were wrong. “The same contamination problem that prevented Patterson from dating the Earth for many years also kept scientists, unknowingly, from measuring accurate concentrations of lead,” Cliff Davidson writes in Clean Hands. “There were plenty of values reported in the scientific literature, but they were mostly wrong.”

Patterson explained that cars puffed millions of tons of lead into the air each year, and the public was likely getting sick so slowly that nobody had noticed. Inaccurate data, in other words, was poisoning people.

Then he aimed for Kehoe’s arguments.

Patterson knew that natural levels were lower than what Kehoe believed. He had seen the evidence in “200-year-old snow, 400-year-old snow, 4000-year-old snow.” Scientists and policymakers needed a vocabulary lesson. The lead in a modern American's body was typical—that is, common—but hardly “natural.”

Muskie: “Now why has [the distinction between typical and natural lead] not been attempted by these organizations or by others than yourself in studying this problem? It seems such a logical approach to a lawyer.”

Patterson: “Not if your purpose is to sell lead.”

Muskie: “Well, I don’t think it is the purpose of the Public Health Service to sell lead.”

Patterson: “That is why it is difficult to understand why the Public Health Service cooperated with the lead industry...”

The hearings did not make an immediate splash. But Patterson’s testimony would influence the Clean Air Act of 1970, which granted the EPA authority to regulate additives in fuel—lead included. “The hearings established a new premise: that lead poisoning was not only a florid disease of workers, it could be an insidious, silent danger,” Dr. Herbert Needleman writes in Public Health.

But Patterson was still a fringe firebrand, and the EPA appeared to not take his complaints about industry influence seriously. In 1970, the agency, looking to establish regulations, asked the National Academy of Sciences to assemble a team of experts to write a report. The academy stacked the lineup with industry consultants, including Kehoe, and scientists with zero expertise in airborne lead. Patterson was not invited. Their report, released in 1971, ignored his research.

Patterson’s jugular throbbed. “Lawyers are not scientists and neither are government bureaucrats—and when the bureaucrats are elected by people, the majority of whom believe in astrology and do not believe in evolution, then this sort of thing can be expected,” he wrote in a letter to Harrison Brown.

Thankfully, a growing number of experts were on Patterson's wavelength. Doctors at the EPA investigating the effects of lead on children had discovered that not only do kids absorb five times more lead than adults, they're also more likely to suffer neurological problems from airborne lead exposure. The physicians consulted Patterson’s work, but they danced around printing his name. He remained too controversial.

In 1972, the EPA erred on the side of caution and proposed regulations requiring the lead in gasoline be reduced, step by step, 60 to 65 percent by 1977.

The lead industry and Patterson were equally furious. Lead interests called the phase-down extreme. Patterson fumed that it was too conservative. What don’t these people understand? he thought. Lead is a known toxin. It’s in our air. Eighty-eight percent of it comes from car exhaust. It harms the brains of children. We must remove ALL of it!

When experts pooh-poohed Patterson’s fears as unrealistic and radical, the scientist returned to the field. There was more work to do.

In a far-flung tract of Yosemite National Park, the air thick with mosquitoes, Patterson began the work that would quiet his critics. Miles north of the fanny packs of Yosemite Valley, Thompson Canyon is ringed by white granite mountains and crystalline streams. Throughout the 1970s, Patterson’s crew rode pack animals and hiked to this high country. During winter, they slogged up the mountain on skis and snowshoes.

“We chose the top of a mountain,” Patterson explained, “because that’s the last place man has gone to pollute.” In other words, the perfect place to test a theory.

Not all lead in the environment is unnatural. Plants can naturally absorb the metal from rocks and rainwater. When herbivores consume these plants, they too will take up some of this lead. The same goes for any carnivore that eats these herbivores, and so on. Patterson hypothesized, however, that under normal circumstances these organisms would naturally filter some lead out. In other words, lead should decrease as you climb up the food chain. He called this process "biopurification" and figured that if lead levels increased (or stayed the same) as you scaled the local food chain, then something abnormal must be stirring the metal in.

The team tested everything imaginable: air, rain, stream water, groundwater, rocks, snowmelt, sedge, grass, and topsoil. They even trapped meadow mice and pine martens, a species of weasel.

If Patterson had any remaining tolerance for sloppiness, it evaporated. One colleague would describe him as “intense x 10^3.” The team collected air samples with vacuum filters and carefully hiked them down the mountain. In the lab, assistants handled samples with acid-cleaned tweezers. “It’s really bad if you lift up the filter with tweezers and drop it onto the counter or anywhere,” Cliff Davidson told Denworth in Toxic Truth. “That means the two weeks you spent camping in Yosemite were wasted at least for that sample. You get very paranoid.”

Four years later, the results showed that lead had spiked along the food chain. Patterson’s team had found the fingerprint: 95 percent of the lead had drifted from car exhaust in San Francisco and Los Angeles, nearly 300 miles away.

If one of the most remote places in California was this polluted with urban lead, Patterson could only imagine how bad the lead pollution must be in cities. Especially in the bodies of those who lived there.

For years, Patterson believed the human body contained 100 times more lead than nature intended, but the Yosemite numbers painted a bleaker picture. “It seems probable that persons polluted with amounts of lead that are at least 400 times higher than natural levels … are being adversely affected by loss of mental acuity and irrationality,” Patterson wrote. “This would apply to most people in the United States.”

During a later study, that picture worsened. Patterson obtained the skeletal remains of ancient Peruvians (up to 4500 years old) and an ancient Egyptian mummy (2200 years old). He even visited medical repositories and obtained the cadavers of two modern Americans and one British person. “We got bodies, and we took out their teeth, we took out segments from their arm balls and segments from their ribs, men and women,” he said.

The human skeleton is a 206-piece lead bank. About 95 percent of your body’s lead is stored in bones. Patterson knew that if he compared the ratio of lead to calcium in bones, he could see how polluted modern Americans were. The results:

The modern American contained nearly 600 times more lead than his or her ancestors.

Before the phase-down of leaded gasoline could begin, the EPA had to hear arguments for and against the regulation. In March 1972, as Patterson crunched numbers on his Yosemite study, the agency held a hearing in Los Angeles. Ethyl arrived with a strategy to delay the phase-down as long as possible.

Typically, speakers filed their statements to the EPA one day before a hearing. The Ethyl Corporation, however, had prepared a sneaky workaround. The company submitted a draft and notified the EPA that Larry Blanchard, Ethyl’s executive vice president, was still tweaking the final copy. It was true; Blanchard had edits. But the additions—a jumble of studies favoring Ethyl’s cause—caught the EPA panel off guard.

“There is absolutely no health justification for such a regulation,” Blanchard railed. He argued that the government had conflated the dangers of lead paint with tetraethyl lead, in what he called a “lead herring.” Tetraethyl lead had saved the American economy billions. It made the modern automobile, the entire car-centric structure of American life, possible. A phase-down would emasculate car engines, cause octane numbers to plummet, and waste crude oil. They might as well burn the money of the American people.

Blanchard’s testimony impressed. Joined by a chorus of other lead interests, he sowed enough doubt that the EPA agreed to review the evidence and postponed the phase-down by one year.

Ethyl needed all the time it could get: A new problem had emerged out of Detroit—the catalytic converter, a device invented to meet new carbon monoxide standards that was, to the industry's dismay, incompatible with leaded gasoline. With both the catalytic converter and the EPA regulations posing existential threats, Ethyl needed to buy time so it could focus on inventing a lead-friendly alternative to the converter.

To extend their stalling effort, Ethyl sued the EPA in 1973. They argued that the scientific opinion on leaded gasoline was far too hazy to enforce any regulations. They had a point. A tidal wave of studies contradicted Patterson’s work. Most labs, including government facilities, still had not adopted his ultraclean methods. Few could confirm his research.

In 1974, a Federal Appeals Court ruled 2-1 in Ethyl’s favor. The financial magazine Barron’s wagged a finger at the EPA, which, in its opinion, had “acted in irrational, unscientific, and arbitrary fashion. It had relied heavily on documents which seemed to support its claims and ignored others which effectively refuted them.”

The EPA, however, demanded a full review in the U.S. Court of Appeals. This time, any champagne Ethyl prepared stayed on ice. The EPA won, 5-4. “Man’s ability to alter his environment,” the court ruled, “has developed far more rapidly than his ability to foresee with certainty the effects of his alterations.”

Two shocking studies—each complementing Patterson’s research—swayed the court. Published in The Lancet and The New England Journal of Medicine, the papers showed that children with higher blood lead levels (between 40 and 68 μg/dL) had lower IQs. These numbers sat below Kehoe’s old poisoning threshold.

When lead companies attempted to bring the case to the Supreme Court, the high court refused. The lead—some of it, at least—had to go.

Blanchard seethed: "The whole proceeding against an industry that has made invaluable contributions to the American economy for more than fifty years is the worst example of fanaticism since the New England witch hunts in the Seventeenth Century." For over half a century, "no person has ever been found having an identifiable toxic effect from the amount of lead in the atmosphere today."

He would not be able to claim that much longer.

When the EPA regulations went live in 1976, lead in the atmosphere plummeted—just as Patterson had predicted.

The industry held out hopes that the results were a fluke. Daniel Vornberg, an industry executive, wrote, “The most difficult data to deal with will be a study which has been represented to show that children’s blood leads are dropping in strict correspondence to air lead decrease and gasoline phase down.”

That’s exactly what happened.

In 1983, an arm of the CDC showed a “one to one drop in blood lead with gasoline lead reduction,” according to Vornberg. When leaded gasoline sales decreased 50 percent, blood-lead levels had dropped 37 percent.

Today, experts know that a blood-lead level over 5 μg/dL can damage a child’s brain, increasing the risk of attention disorders, lowering IQs, affecting academic achievement, and delaying puberty. In the mid-1980s, the Agency for Toxic Substances and Disease Registry estimated that nearly 17 percent of preschool kids had blood-lead levels over 15 μg/dL. The problem was especially bad in urban black neighborhoods: About 55 percent of African-American children in cities had damaging amounts of lead in their blood.

Year after year, these numbers plunged.

Patterson refused to run victory laps. Lead, he predicted, “has contaminated our bodies, and will destroy lives in amounts that are almost too small to see…” He would never cease gathering new data until lead was eradicated entirely.

He returned to the sea, realizing that on his first voyage, he had overlooked his boat’s metal hull. The ship’s wake left a bubbly trail of lead contamination. This time, Patterson came better prepared and brought a rubber raft for collecting samples. Watching from a main vessel, Patterson blanched with seasickness. When they docked, an ambulance waited for him on shore. “Get the hell out of here,” Patterson told the medics. “We’ve got samples to analyze!”

The upper ocean layers, the numbers showed, were still riddled with industrial lead.

Patterson also fished for tuna and crammed frozen albacore into the refrigerators of Caltech’s geology building. (“Those of us with offices off that corridor, however, lived in fear of an extended power failure,” a colleague recalled.) Patterson compared the freshly caught albacore to canned tuna and discovered that the canned fish contained 1,000 to 10,000 times more lead. The study hit mainstream news and prompted manufacturers to stop soldering tin food cans with lead.

In the 1980s, with the help of grants from the National Science Foundation, Patterson climbed Japan’s Hidaka Mountains in search of pristine habitats. He tramped through the rainforests of American Samoa, the Marshall Islands, and New Zealand to measure ambient air and rainwater. Lead was there. Again, Patterson fingerprinted the source—tailpipes as close as Tokyo and as far away as Los Angeles.

When critics quibbled that volcanoes, not cars, were responsible for lead pollution, an aging Patterson was helicopter-dropped on the lip of volcanoes to take air samples. (In Hawaii, as his team stood on one volcano, a colleague set a backpack on the ground and watched it burst into flame.) The findings would absolve volcanoes of any wrongdoing. The lead spewing from eruptions couldn’t compete with that belched by vehicles.

By the mid-1980s, the lead industry, running out of arguments, resorted to denial. In 1984 Senate testimony, Dr. Jerome Cole, President of the International Lead Zinc Research Organization, claimed “there is simply no evidence that anyone in the general public has been harmed from lead’s use as a gasoline additive.” By that point, legislators were more apt to listen to Patterson. Once a kooky egghead, he had risen to become a mainstream scientific prophet. He was accepted into the National Academy of Sciences. He won the Tyler Prize, the greatest environmental science award. An asteroid was even named in his honor.

In 1986, the EPA called for a near ban of leaded gasoline. Four years later, the amended Clean Air Act required that any remaining leaded gasoline be removed from service stations by December 31, 1995.

Patterson would never see that day. Born months after leaded gasoline was discovered, he would die three weeks before lead shared its last kiss with America’s gas tanks. He was 73.

At Caltech, Clair Patterson developed the odd pastime of wandering campus in search of bird droppings. He’d collect excrement, bring it inside, and glue the droppings—of all different shades, shapes, and sizes—in artsy patterns to the side of his mass spectrometer. When Patterson’s assistants first noticed the machine mottled with dung, they scrambled to alert their boss, unaware that the graffiti was his.

Patterson’s artwork had a clear message: If crappy samples go in, crappy numbers will come out. A spectrometer is a marvelous, but limited, machine. It’s only as wise as the person operating it. For decades, experts had treated machines as “oracles of wisdom” instead of trusting their own intuition, and, as a result, a fog of mediocrity had settled over the field of lead studies. So, as Patterson’s colleague Thomas Church recalls, his students spent each day “confronted with this most foul visual desecration of their sacred samples.” The art didn’t distort their results, but it did hammer home the lesson that, “Wisdom came, if and when it did, from humans.”

“I’m a little child,” Patterson would say. “You know the emperor’s new clothes? I can see the naked emperor, just because I’m a little child-minded person. I’m not smart. I mean, good scientists are like that. They have the minds of children, to see through all this façade.”

For decades, most experts rejected Patterson’s work because they carelessly tested corrupted samples and could not verify his data. In other words, they failed to see through the façade. When Patterson was finally accepted into the National Academy of Sciences in 1987, his colleague at Caltech, Barclay Kamb, summed his career up nicely: "His thinking and imagination are so far ahead of the times that he has often gone misunderstood and unappreciated for years, until his colleagues finally caught up and realized he was right."

By the early '90s, researchers who had once written off Patterson as a cranky caricature of Mr. Clean had adopted his laboratory methods. Many of his students, fiercely loyal to both Patterson and his procedures, had spread the Good Word. “I went to work with him for what was supposed to be a six month postdoc, and remained associated with him for the next two decades,” his colleague Russ Flegal wrote in a remembrance. When Patterson died, Flegal tried calling everybody who knew him; it took more than three days. “There is not a ‘tree’ with environmental scientists branching off Patterson’s trunk,” Flegal wrote, “there is a forest.”

Today, contamination control is standard protocol in labs. As Flegal writes, “His sphere of influence is now so pervasive that most scientists who promulgate his ‘clean hands, dirty hands’ protocols for handling environmental samples do not know the origins of those protocols, and many do not even know who Patterson was.” The scientific research that has resulted—from studies on mercury poisoning to work that cracked the composition of the Apollo 11 moon rocks—is difficult to quantify.

Here’s what we can quantify. In the 1970s, lead in the atmosphere peaked at historic highs. It has since cratered to medieval levels. In the 1960s, drivers in more than a hundred countries used leaded gasoline. Today, that number is three. In 1975, the average American had a blood-lead level of 15 μg/dL. Today, it’s 0.858 μg/dL. A 2002 study in Environmental Health Perspectives found that, by the late 1990s, the IQ of the average preschooler had risen five points. Needleman writes, “The blood lead levels of today’s children are a testimony to his brilliance and integrity.”

Patterson was not one to bask in self-congratulation. He believed that all accomplishments were collective, and he deferred success to his predecessors and colleagues. “True scientific discovery renders the brain incapable, at such moments, of shouting victoriously to the world ‘Look at what I have done! Now I will reap rewards of recognition and wealth!’” Patterson wrote. “Instead, such discovery instinctively forces the brain to thunder, ‘WE did it!’”