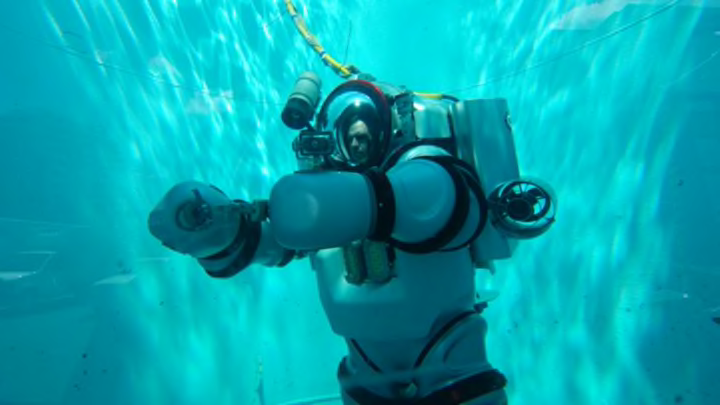

It looks like something you'd wear to visit the Moon or Mars, but the Exosuit, on display at the American Museum of Natural History's Milstein Hall of Ocean Life through March 5, is actually built to explore another place that's largely alien to humans: the ocean. The atmospheric diving system (ADS) is capable of taking a diver down to 1000 feet while keeping him at surface pressure. A hybridization of wet diving and submersibles, "it allows the human form to be embedded in an environment," says Michael Lombardi, AMNH's Dive Safety Officer and the project coordinator of the Stephen J. Barlow Bluewater Expedition, which will take the suit out this July on its first mission to explore an area 100 miles off the coast of New England known as The Canyons. "People have dived to these depths just to say that they've done it," Lombardi says. "That's very different than doing it for work, which is what we're doing."


The 6.5-foot-tall hard-metal suit is owned by the J.F. White Contracting Company and was designed and built by Nuytco Research Ltd.; it's currently the only Exosuit in existence. The suit, which can be modified to fit divers from 5'6" to 6'4" tall, is driven by four 1.6-horsepower foot-controlled thrusters and has 18 rotary joints in the arms and legs, which allow for a wide range of movement and give the diver the ability to use special accessories. Though it weighs between 500 and 600 pounds on land, it's nearly neutrally buoyant in the ocean.

On its July expedition to The Canyons (where the continental shelf drops off to depths of more than 10,000 feet), the suit will allow a team of scientists, including ichthyologists, neurologists, and marine biologists, to conduct studies in the mesopelagic (or mid-water) zone, where they can find a number of animals that have only been studied using remotely operated vehicles (ROVs) or after being caught in trawl nets. The mission will take place at night, because animals make a vertical migration from the depths to shallower water at that time. The team is looking to study creatures that exhibit bioluminescence (generating light using a chemical reaction). The discovery of green fluorescent protein in the '60s allowed scientists to reveal the inner workings of cells in a non-invasive way, according to Vincent Pieribone, Yale University School of Medicine Professor and Chief Scientist of the Stephen J. Barlow Bluewater Expedition; identifying new bioluminescent proteins could potentially help in other areas of biomedical research, including cancer cell tagging.

Working in tandem with an ROV, the suit will be equipped with suction tools and a special containment vessel (still in development) that will allow the operator to gently capture fish and invertebrates and place them in front of the ROV's cameras to be photographed in high resolution. The suit is so dexterous that a user can pick up a dime off the floor of a pool—and it has to be, when working in areas where there might be 9000 feet of water below it. "If you drop something," Pieribone says, "that's a long way down." The Exosuit allows a diver to work for 4 to 5 hours on site, and is built to have 50 hours of emergency support.

The back of the Exosuit, which shows the life support system. Photo courtesy AMNH/Michael Lombardi.

The suit itself cost approximately $600,000 to make; add in instrumentation, and the total cost is somewhere around $1.3 million. In development for about 15 years, Lombardi says, the Exosuit is a "quantum leap forward" from the Newtsuit of the 1980s (which was also manufactured by Nuytco and is still used today).